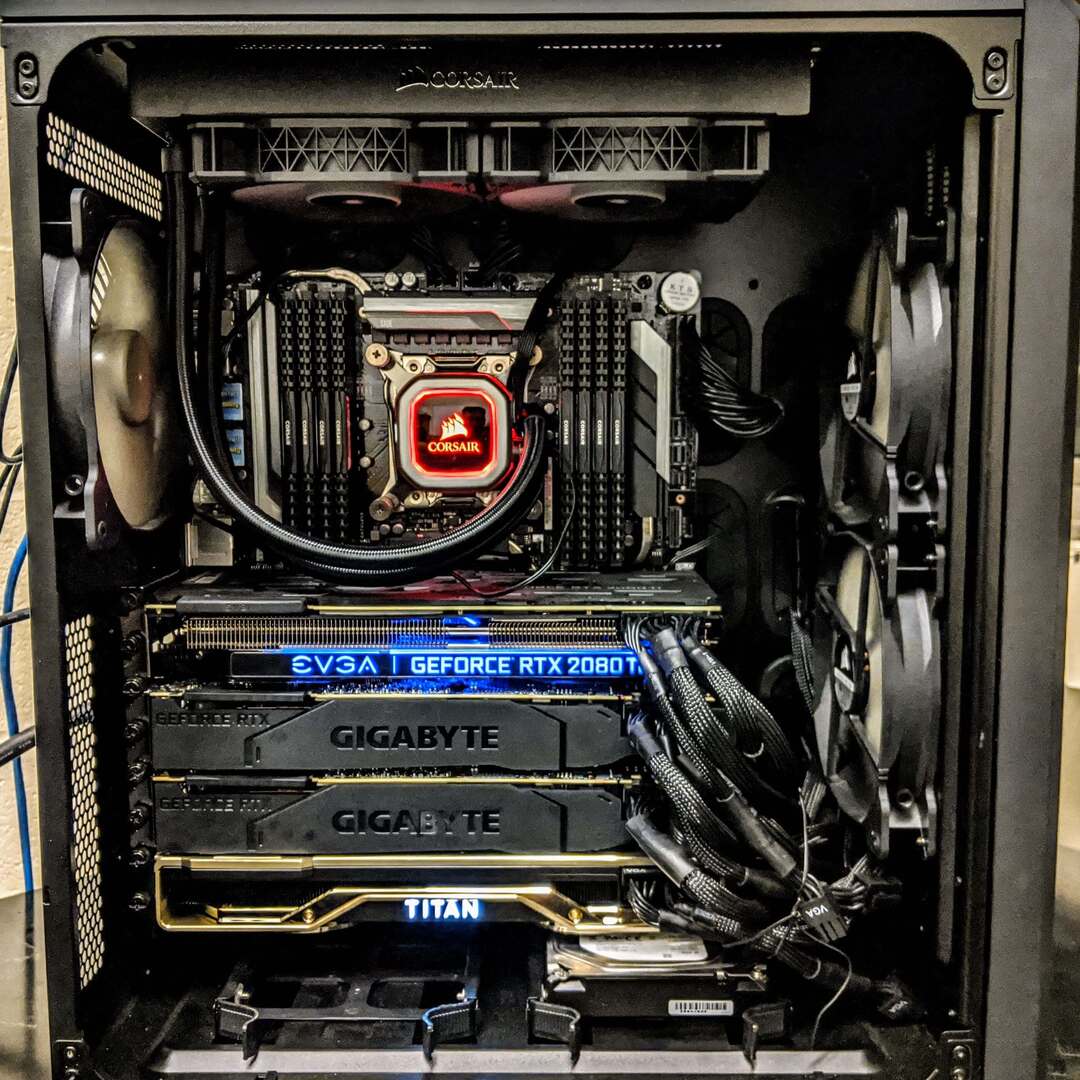

Data passes the following way in order to be loaded efficiently: Despite that, tensor cores need data to perform computations on. Modern GPUs are based on tensor cores that are capable of multiplying 4x4 matrices in one operation which is blazing fast. It makes sense to dig just slightly deeper in a simplified CUDA architecture. This is the reason why we mean "CUDA cards" when talking about the GPU in the ML context. CUDA is a proprietary platform and set of APIs for parallel computations owned by NVIDIA. Most of the popular and production-ready frameworks (TensorFlow, PyTorch, Keras) are designed and optimized for CUDA-enabled devices. Unfortunately, there is a monopoly in the ML framework world. The very first consideration is that we need NVIDIA graphic cards only for deep learning. It's designed to perform computations on big chunks of data (throughput-optimized) in parallel which makes it perfect for model training or inference where it's really needed ( details are here). GPU is the key component of any ML workstation. When it comes to building a deep learning machine (or any custom PC), there are 8 parts that should be considered: It helped to overcome my constant fear that I could buy something that would not work together. It suggests possible components compatibilities and things to check before ordering the list. Here I'm going to mention information that was helpful for me along with details Tim did not focus on.Īlso, PCPartsPicker's Builder was super helpful during planning PC. In his blog, Tim explained at length the importance of each PC part for ML workstation and provides general pieces of advice on choosing them. Which GPU(s) to Get for Deep Learning: My Experience and Advice for Using GPUs in Deep Learning.Particularly, I could highlight two posts from Tim Dettmers: I have found a few useful blog posts which helped me quickly to grasp the topic. 
While building a deep learning workstation sounds like a similar task, there are nuances that should be accounted for in order to build a cost-efficient and ML-compatible machine. There are a lot of resources on building gaming PCs. So it took me a while to understand what it would cost me to build a custom machine. I have never been interested in custom PC building, hardware or gaming.

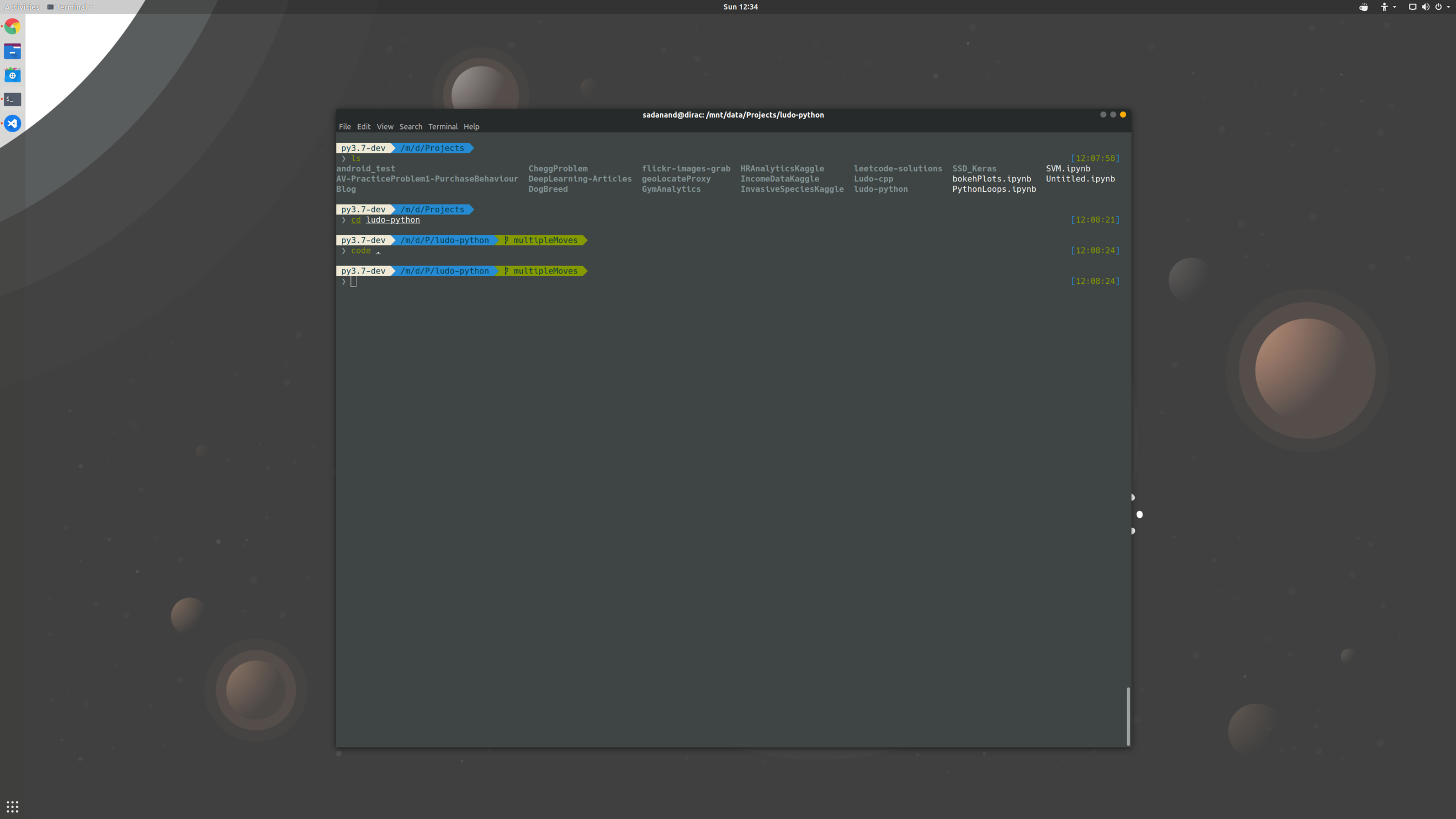

I clearly realized if I wanted to do more complex deep learning experiments and projects, then I just need to have 24/7 access to any kind of GPUs. You have to plan what you are going to experiment with and make those places somehow configurable beforehand.Īll in all, the experience was frustrating. However, PyTorch has outstanding support in academic and other ML-associated communities which means a bunch of ( almost) read-to-use examples of state-of-art technics.Īlso, when you run your code in the cloud, you can not easily experiment. It was time-consuming to even perform a dev run of my recent changes. PyTorch is much slower on CPU than TensorFlow or Keras. While working on the competition, I switched from Keras to PyTorch. Several mornings in a row I started my day dealing with endless amount of new issues that had arisen all of the sudden. I think it was related to dependency version mismatch and I spotted errors like "method X is not found in package Y", etc. Kaggle notebooks randomly threw weird errors after installing project packages. This approach turns out to have underwater stones. The notebook was calling classes and functions from the package. These installable packages I was uploading into a private Kaggle dataset which in turn was mounted to a notebook. I used poetry as a package manager and decided to generate an installable package every time I made meaningful changes to the project in order to test them in the cloud. What could go wrong? Life Without GPUĪs it turned out, there are a lot of issues I encountered in the mentioned workflow.įirst of all, my solution source code quickly became an entire project with a lot of source code and dependencies. I thought I would be able to prototype locally and then execute notebooks on the cloud GPU. ~30h GPU and/or ~30h TPU hours per week on Kaggle Kernels.MacBook Pro 2019 (Intel Core i9 & Intel UHD Graphics 630 1536MB & 16GB DDR4).16bits variant of the dataset holds 350Gb. 
HPA dataset contains nearly 150Gb of 8bits 4-channels protein images. I was taking part in Human Protein Atlas (HPA) - Single Cell Classification competition on Kaggle. When you start to approach problems that are akin to real-life ones and you see hundreds of gigabytes of large datasets, your gut feeling starts to tell you that your CPU or AMD GPU devices are not going to be enough to do meaningful things. There are always some "buts" that make our lives harder. I would drop my mic at this point if this article was not about building a custom ML workstation. Kaggle Kernels and Google Colab are great.
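The "4x4 matrices in one operation" claim is easier to appreciate with the arithmetic spelled out. NVIDIA describes one Volta tensor-core operation as a fused multiply-accumulate, D = A×B + C, on 4x4 matrices (FP16 inputs, with FP16 or FP32 accumulation). The plain-Python sketch below performs the same computation the slow way, one scalar multiply-add at a time, to show how much work a single tensor-core operation replaces. It only illustrates the math. `mma_4x4` is a hypothetical helper name, and this is not how tensor cores are actually programmed.

```python
# Illustrative sketch: the arithmetic behind one tensor-core operation,
# D = A x B + C on 4x4 matrices, done one scalar multiply-add at a time.
# `mma_4x4` is a hypothetical helper, not an NVIDIA, CUDA or PyTorch API.
from typing import List, Tuple

Matrix = List[List[float]]
N = 4  # tile size NVIDIA describes for Volta tensor cores


def mma_4x4(a: Matrix, b: Matrix, c: Matrix) -> Tuple[Matrix, int]:
    """Return (D, number of scalar multiply-adds) for D = A x B + C."""
    d = [row[:] for row in c]  # start from a copy of the accumulator C
    fma_count = 0
    for i in range(N):
        for j in range(N):
            for k in range(N):
                d[i][j] += a[i][k] * b[k][j]  # one scalar multiply-add
                fma_count += 1
    return d, fma_count


if __name__ == "__main__":
    identity = [[1.0 if i == j else 0.0 for j in range(N)] for i in range(N)]
    a = [[float(i * N + j) for j in range(N)] for i in range(N)]
    ones = [[1.0] * N for _ in range(N)]
    d, count = mma_4x4(a, identity, ones)  # A x I + 1 = A + 1
    print(d[0])   # [1.0, 2.0, 3.0, 4.0]
    print(count)  # 64
```

The count comes out to 4 × 4 × 4 = 64 scalar multiply-adds, all of which a tensor core handles as a single operation. Together with thousands of such operations running in parallel, this is where the GPU's throughput advantage comes from.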
